By the time I finished writing this article, I realized that this is much more philosophical than mathematical. Anyway, I hope readers will enjoy it.

Nature of Reality

Before delving into the meaning and usefulness of random variables and stochastic processes in empirical modeling, it is important to understand the nature of reality and the role mathematics plays in learning about this reality from data. Without understanding the nature of reality, our knowledge of it will be incomplete and perhaps incorrect as well.

So, what is the nature of reality? Nature and mathematics have different ontological histories. Nature has a natural beginning. As far as we know, everything (time, space and everything in them) started with the Big Bang around 14 billion years ago. Nature can be understood as a set of ordered events. Things cannot happen in static time.

So what are these events? They are happenings or occurrences due to some known/unknown laws of physics. For example: rainfall, economic recession, formation of a star, a running horse, planetary motion and so on.

Pictures: Milky Way and New York Stock Exchange

In a given universe, trillions and trillions of events are happening every microsecond at microscopic scales, medium scales and the scales of planets, solar systems and galaxies. As we observe them directly or indirectly, we observe them holistically, at an abstract level. We rarely learn about them in an experimental setting. We get a whole picture made of infinitely complex mosaics and shapes. Mathematics is nowhere explicitly observed in nature.

On the other hand, our senses are designed (not by god though!) to cope with hunting and survival and to make basic inferences about nature.

Events occur as if someone (god!) is tossing a complex coin and the events we see are heads and tails of that experiment. By knowing the distribution of these events we aim to know the nature of the coin, the way it is designed and tossed.

In this complex reality, how are we going to learn? The answer is mathematical simplification. Mathematicians have found a way to turn these abstract events into numbers. Events cannot be added, multiplied or fed into a computer simulation. But numbers can be summed, multiplied and simulated. Numbers can be much easier to understand than pure events. Note that nature did not create numbers and symbols! We did, to facilitate our understanding of the events in this universe.

Random Variable

A random variable, quite counter-intuitively, is a function which maps events into real numbers such as $latex -1, \pi, 1215, 0.25, 10/3 $. Let us take the easiest events we know of: the outcomes of a coin toss. When we, playing the role of God, toss an unbiased coin (assuming we can have an unbiased coin), we expect to get either of the two possible outcomes or events with equal probability: Head (H) or Tail (T). Obviously, even the god does not know exactly which event is going to turn up.

Now how does a random variable help us here? Suppose X is a random variable and let us read it as “a coin toss”. We can never know in advance whether H or T is going to be the outcome/event resulting from a particular toss of a coin. Once the coin is tossed, the event will be either H or T. So a coin toss can potentially give rise to one of the two events. H and T are abstract events. They do not carry any numerical properties of their own. But we can use the random variable X to assign a number to each of these events. The only condition is that the numbers have to be different, so that we can tell the two events apart. For example:

$latex X(H) = 1, \quad X(T) = 0 \qquad (1) $

Are these numbers 0 and 1 meaningful in absolute terms? No. We can assign any other pair of distinct numbers, such as $latex X(H) = 125, X(T) = -2.312 $, without changing the conclusions. Of course, the first pair of numbers is much easier to handle. So we stick to the first pair.

So when we say “a coin toss”, it is a random variable. But when we toss the coin and see the outcome, that becomes an observed outcome or data. Mathematics turns the abstract outcome or data into numbers. We use these numbers in many different ways to learn about the abstract reality. In this sense, numbers or mathematics are not the end but only the means.
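The claim that the particular numbers do not matter can be checked with a small R sketch. The object names (`events`, `X1`, `X2`) and the seed are assumptions made for illustration; the two labelings are the ones given in the text.

```r
# Abstract outcomes of ten coin tosses (no numerical meaning yet).
set.seed(1)  # arbitrary seed, only for reproducibility
events <- sample(c("H", "T"), size = 10, replace = TRUE)

# Two random variables: different labelings of the same two events.
X1 <- c(H = 1, T = 0)          # the labeling in equation (1)
X2 <- c(H = 125, T = -2.312)   # the alternative labeling from the text

x1 <- unname(X1[events])  # data under the first labeling
x2 <- unname(X2[events])  # data under the second labeling

# The share of heads is the same whichever numbers we chose.
mean(x1 == X1["H"])
mean(x2 == X2["H"])
```

Both calls to `mean()` return the same share of heads, because the labeling changes the numbers but not which tosses were heads.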

Gross Domestic Product (GDP)

Now let us turn to a bit of economics, the dismal science! What is GDP from this perspective? Economic systems are among the most complex natural structures imaginable. Millions of biological units (humans) are interacting with each other to generate this system.

Nepal is a country and its economic system is very complex. One way to understand it would be to observe how much is produced every year in this country. In abstract terms, millions of different things are produced in various quantities. This basket of products is the true picture of reality. But, can we fathom it?

When we say GDP of Nepal for 2014 (a coin toss!), it is a random variable. Once we look at the basket, it becomes an event/outcome (H or T). If we use the formula (function) of GDP to get a numerical value, we call it data (US$ 19 billion). So GDP converts an abstract economic event into a number that we can feed into our computers.

Hence if we observe 60 years' worth of GDP per capita of Nepal, there are 60 random variables at work, converting each of these 60 years of economic activity into 60 different numbers.

Note: Guess what these two vertical lines are in the figure. I will try to discuss them in detail in some future posts.

Similarly, inflation can be another random variable, or another way to assign a numerical value to an economic event.

More examples of random variables:

- Number of members in a family.

- Number of disabled people in a district.

- Number of dots on the top face of a die.

- Number of tigers in Chitwan National Park in a given year.

- Treasury bill rate

- Stock index

- Age of a student in a class

Practice: Think about the possible events/outcomes in each of these cases. How do we move from the world of abstract events to the world of numbers?

All these are random variables before we observe a particular outcome. When tossed, each of these random variables gives rise to a number representing some abstract events of nature.

Notation: We denote random variables by capital letters like X, Y and Z and their particular outcomes by lower case letters like x, y and z.
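The distinction between X and x can be loosely mimicked in R. This is only a sketch: writing the random variable as a function named `X` that performs one toss of a die is an assumption made for illustration, as is the seed.

```r
# X: the random variable "number of dots on the top face of a die".
# Written here as a function: calling it performs one toss.
X <- function() sample(1:6, size = 1)

set.seed(42)  # arbitrary seed, only for reproducibility
x <- X()      # lowercase x: one observed outcome, a plain number
x

# Repeating the toss gives further realizations of the same X.
xs <- replicate(5, X())
xs
```

Before the call, `X` only describes what could happen; after the call, `x` is a fixed number that we can treat as data.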

We should never forget that behind each of these outcomes or random variables, there are abstract events of reality. And again, random variables too are not the end but means to learn about these realities.

Stochastic Process

Random variables alone cannot capture the ordering of events, be it inter-temporal or intra-temporal. In other words, we did not care much about the order of events while tossing the coin: the first toss was not much different from the second. But in reality, the order often matters. For example, it matters whether GDP is for the year 2014 or 2015. It matters whether we are talking about the age of female students or male students.

So let us introduce ordering (index $latex t $) into the concept of a random variable as a subscript: $latex \{X_t, t = 1, 2, 3, \ldots\} $. This ordered sequence of random variables is called a stochastic process. Note that a stochastic process is itself an infinite sequence carrying infinitely many potential events. But when we observe a particular realization of this process, it is always finite in size: $latex \{x_t, t = 1, 2, 3, \ldots, T\} $, and we call it data or observations.

Now we can view the last 60 years of GDP data of Nepal as a finite realization of the stochastic process $latex \{GDP_t, t = 1, 2, 3, \ldots\} $. If we could re-run the history of Nepal, we would almost surely get a different sequence of data from the same stochastic process.

From a traditional statistical perspective, a stochastic process can be viewed as the population and its finite realization can be viewed as the sample data. So we can apply all the principles of sample versus population even if we are dealing with census data or time series data, where the idea of a population is very murky.
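The idea of re-running history can be sketched in R. The process below, a random walk with drift for log GDP, is purely an assumption for illustration and not a model of Nepal's actual GDP. The function name `simulate_history` and the drift and volatility values are hypothetical.

```r
# Hypothetical stochastic process: log GDP as a random walk with drift.
# The drift and volatility values are assumptions chosen for illustration.
simulate_history <- function(n_years = 60, drift = 0.04, vol = 0.03) {
  shocks <- rnorm(n_years, mean = drift, sd = vol)
  cumsum(shocks)  # log GDP relative to the starting year
}

set.seed(2014)  # arbitrary seed, only for reproducibility
history_a <- simulate_history()  # one "run" of history
history_b <- simulate_history()  # a re-run of the same process

# Same process, two different finite realizations.
plot(history_a, type = "l", ylim = range(history_a, history_b),
     xlab = "t (year)", ylab = "log GDP (relative to start)")
lines(history_b, lty = 2)
```

The two lines come from the same process with the same parameters, yet they trace different paths. Only one such path is ever available to us as data.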

Examples with R

Example 1: Suppose $latex \{X_t, t = 1, 2, 3, \ldots\} $ represents a sequence of coin tosses. Each toss can give rise to either H or T, and these events are transformed into numbers (1 and 0) using equation (1).

How difficult is it to toss the coin once? Very easy. What about 20 times? We may manage to have 20 coins and toss them at once or in order. But what if we want to toss them 100 or even 1000 times? It becomes very difficult, yes!

Fortunately, we have very good computers and programming languages like R. It is free and open source. You can get it here:

https://cran.r-project.org/bin/windows/base/

Now let us toss a coin 100 times in R and see the outcomes. Use the command

rbinom(100,1,0.5)

We get output in R like this.

> rbinom(100,1,0.5)

[1] 0 0 0 1 0 0 0 0 1 1 0 1 1 0 0 0 0 1 1 1 0 1 1 0 1 1 1 1 1 0 0 0 0 1

[35] 0 0 0 0 1 1 0 1 1 0 1 0 0 0 0 1 0 1 1 0 0 1 0 0 1 0 0 0 1 1 0 0 1 0

[69] 0 1 0 1 1 0 0 0 1 1 1 1 0 0 0 0 0 0 1 1 0 1 1 1 0 1 1 0 1 0 0 0

>

We can easily toss a coin a million times in R. Now we want to look at the distribution of the outcomes to learn something about the reality: the nature of the coin tossed. The easiest way to get a picture of the distribution from observations is to draw a histogram of the observations. Use the commands

> x <- rbinom(100,1,0.5)

> hist(x)

to get this

It seems the coin is pretty much unbiased, isn’t it?

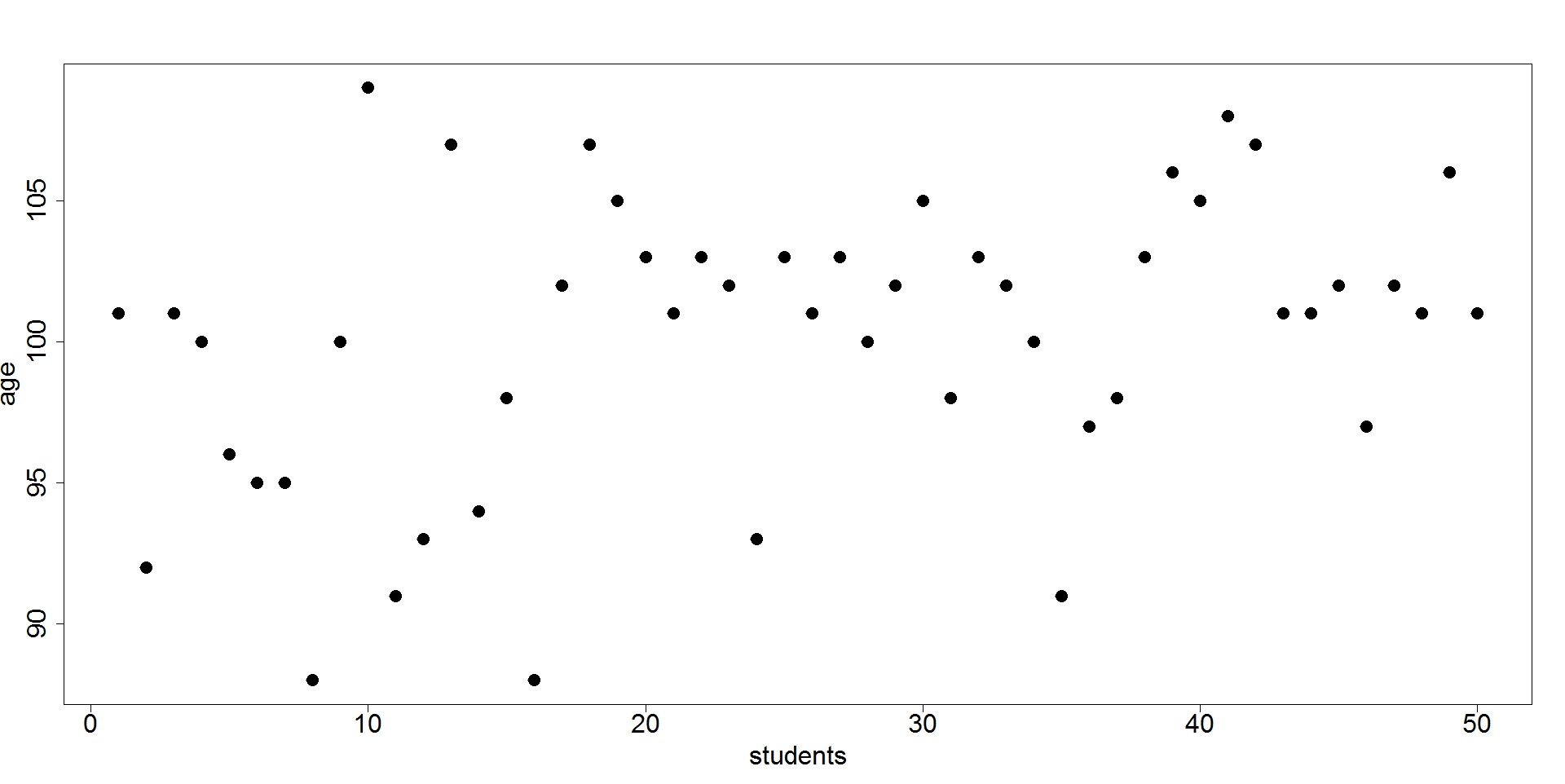
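A histogram is one way to judge the coin; the share of heads is a simple numerical check alongside it. The sketch below uses only base R functions (`rbinom`, `table`, `mean`). The seed and the variable names are assumptions made for illustration.

```r
set.seed(123)  # arbitrary seed, only for reproducibility
x <- rbinom(100, 1, 0.5)   # 100 tosses, as in the text
table(x)                   # counts of 0s (T) and 1s (H)
mean(x)                    # share of heads among the 100 tosses

x_big <- rbinom(1e6, 1, 0.5)  # a million tosses
mean(x_big)                   # should sit very close to 0.5
```

With only 100 tosses the share of heads can wander a few percentage points away from 0.5. With a million tosses it settles much closer, which is the sense in which more data tells us more about the coin.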

Now suppose we have a class with 50 students and we want to learn about their age (in months). So we collect the data on their age. Now how do we view this data from the perspective of a stochastic process? We assume that there is a sequence of random variables $latex Age_t $, one for each student, which when tossed will produce the ages of those 50 students.

Let us play the god game now. Suppose we generate the ages of these students using our computers. Should they not look like this?
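One way to play this game in R is sketched below. The data-generating process is an assumption made purely for illustration: ages drawn from a normal distribution centred on 240 months (20 years) with a spread of 12 months. Both numbers are made up, as are the variable names.

```r
set.seed(50)  # arbitrary seed, only for reproducibility
n_students <- 50

# Assumed process: ages roughly normal around 240 months (20 years)
# with a standard deviation of 12 months. Both values are made up.
age <- round(rnorm(n_students, mean = 240, sd = 12))

head(age)  # first few simulated ages in months
hist(age, main = "Simulated ages of 50 students", xlab = "Age (months)")
```

Changing the assumed mean, spread or distribution changes the "coin" being tossed, and the histogram of the simulated ages changes with it.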

This article is becoming too long! I will talk more about stochastic processes in the future.

(In the next post, I will talk about statistical and mathematical models and their adequacy in explaining the data. This will be a mix of philosophy and mathematics. We will also learn about the meaning of a good model.)

Niraj Poudyal, PhD